5 Research Questions and Aims

Give yourself a pat on the back. Go ahead. No one is watching.

If you’re following the plan I’ve outlined so far, you’ve been seeking out research ideas by attending presentations, talking with fellow students and mentors, skimming interesting journals, searching research databases like PubMed for keywords you’ve identified, and finding relevant systematic reviews and meta-analyses. You’ve also been developing your critical appraisal skills and, in the process, have been taking note of gaps in our knowledge. All of this work is leading you to identify potential research problems worth solving. The next step is to take a broad research problem and narrow your focus to a more specific research question and develop study aims and (potentially) hypotheses. I’ll walk you through the process and showcase the lifecycle of a good research question.

5.1 Types of research questions

There are three basic types of research questions we can ask (Hernán, Hsu, and Healy 2019):

- Descriptive

- Predictive/Relational

- Causal (counterfactual prediction)

This framework is an attempt to simplify the world to help you learn, but you will soon see that the lines between these three categories can blur. For one, a study that aims to assess the evidence for a claim that X causes Y can include elements of prediction and description. Second, answering questions of all three types can involve statistical inference, as we often want to quantify the uncertainty in our estimates. So there is a possibility of conflating our aims (e.g., to estimate the causal effect of X on Y) and methods (e.g., the use of a statistical test to examine the association—a relationship—between X and Y) (Hernán 2018). Nevertheless, it is helpful to erect some boundaries to introduce these concepts and let you decide if they are useful as you gain more expertise.

5.1.1 DESCRIPTIVE

Every study uses an element of description. Let’s say you recruit a sample of 100 people who suffer from the same disorder and conduct a trial to estimate the effect of a new drug on some clinical outcome. When you summarize what you know about these 100 people at the time they were recruited, for instance the average age of the group, you’re describing the sample. Descriptive summaries appear in nearly every research article. But we can distinguish between the use of descriptive statistics—e.g., what is the mean age of these 100 people, the sample—and descriptive research questions.

One common descriptive research question in global health follows this format:

What percentage of women of reproductive age in Nepal use a modern method of contraception?

As we will discuss later in the book, you could answer this question by conducting a survey of contraceptive behavior with a representative sample of women in Nepal. That’s what the DHS Program did in 2010 (Ministry of Health and Population, New ERA, and ICF International Inc. 2011).

“Modern methods” like condoms, implants, and pills are distinguished from (and are more effective than) “traditional methods” such as withdrawal and the rhythm method.

Researchers surveyed a random sample of 10,826 households across the country and interviewed 12,674 women between the ages of 15 and 49 about their health behaviors and preferences. They estimated that 43.2% of married women reported using some modern method of contraception.


Figure 5.1: Current use of contraception by age in Nepal. Source: DHS Nepal 2011, https://tinyurl.com/y4u5wfkv.

Of course this is what they learned from the sample, but the research question required inference to all women in Nepal in this demographic (the target population). As you’ll learn in Chapter 13, there is some error involved in speaking with some but not all women in Nepal, and the researchers estimated that the true percentage probably ranged from 41.0% to 45.3%. (I’m being a bit fast and loose with the interpretation of this confidence, or uncertainty, interval, but I’ll make up for it later.) This is an example of descriptive inference to answer a descriptive research question.
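To make the idea of an uncertainty interval concrete, here is a minimal sketch of a normal-approximation interval for a proportion. The estimate of 43.2% comes from the text, but the sample size `n` and the `design_effect` are hypothetical values I chose for illustration. The DHS uses a complex survey design and computes its own standard errors, so do not expect this sketch to reproduce the published interval exactly.

```python
import math


def proportion_ci(p_hat, n, design_effect=1.0, z=1.96):
    """Approximate 95% interval for a proportion (normal approximation).

    design_effect inflates the simple-random-sample variance to mimic
    the extra uncertainty of a clustered survey design.
    """
    se_srs = math.sqrt(p_hat * (1 - p_hat) / n)   # SE under simple random sampling
    se = se_srs * math.sqrt(design_effect)        # SE after the design adjustment
    return p_hat - z * se, p_hat + z * se


# p_hat = 0.432 is from the text; n and design_effect are HYPOTHETICAL.
lower, upper = proportion_ci(0.432, n=9000, design_effect=4.0)
print(f"Estimated interval: {lower:.1%} to {upper:.1%}")
```

The point of the `design_effect` argument is that talking to clusters of households, rather than a simple random sample of individuals, usually widens the interval.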

5.1.2 PREDICTIVE/RELATIONAL

Of course not everyone needs to be using modern methods of contraception. If you’re not sexually active, you’re not at risk for pregnancy. Or if you’re trying to get pregnant, modern methods will make that challenging. Therefore, public health officials wanting to promote modern method use would take this indicator and combine it with several others in the dataset to estimate the “unmet need” for family planning: women who say that they want to prevent or delay pregnancy, but are not using contraception.

Description is essential to science and decision-making related to needs and resources. The result from Nepal suggests that more than half of married women of reproductive age were not using a modern method of contraception in 2010. This is a very useful thing to know if you work for the Ministry of Health and are concerned about promoting reproductive health.

But you probably also want to go the next step and ask, “What predicts modern method use?” Stated differently, what factors are associated with/correlated with/related to modern method use? Who is most likely to use modern methods? What are the barriers to modern method use? These are questions about the strength and direction of the relationship between two or more variables and represent our second category of research questions.


Figure 5.2: Predicted probabilities of use of modern method of contraception. Source: Yours truly using data from the DHS Nepal 2011 survey, https://tinyurl.com/y4u5wfkv.

If you inspect Figure 5.1 once again, you will see that the cross-tabulation of any modern method by age shows that modern method use is more common among older women compared to younger women. Modern method use appears to increase with age, according to this simple descriptive summary.

So we might want to ask, “To what extent does age predict self-reported modern method usage among currently married women of reproductive age in Nepal?” We can answer this question with a statistical model called logistic regression. Figure 5.2 shows the predicted probabilities of modern method use by age among currently married women. As we expected, modern method use is more common among older women.
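If you are wondering how a logistic regression turns age into a predicted probability, here is a minimal sketch. The intercept and slope are hypothetical numbers I made up for illustration; they are not estimates from the DHS data, and the real model behind the figure may include other terms.

```python
import math


def predicted_probability(age, intercept, slope):
    """Convert a linear predictor (log-odds) into a probability."""
    log_odds = intercept + slope * age
    return 1.0 / (1.0 + math.exp(-log_odds))


# HYPOTHETICAL coefficients, not fitted to any real data.
B0, B1 = -2.0, 0.05

ages = [15, 20, 25, 30, 35, 40, 45, 49]
probs = [predicted_probability(age, B0, B1) for age in ages]

for age, p in zip(ages, probs):
    print(f"age {age}: predicted probability of modern method use = {p:.2f}")
```

A positive slope means the predicted probability rises with age, which is the pattern described above. Fitting the model to data is what tells you the sign and size of that slope.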

5.1.3 CAUSAL


Figure 5.3: Unfortunately, no one can be told what the Matrix is. You have to see it for yourself. This is your last chance. After this there is no turning back. You take the blue pill, the story ends, you wake up in your bed and believe whatever you want to believe. You take the red pill, you stay in Wonderland, and I show you how deep the rabbit hole goes…

Questions about the relationship between X and Y make up the bulk of literature in global health. However, sometimes we want to go beyond asking to what degree X and Y are related and ask, “Does X cause Y?”, or “What is the causal effect of X on Y?” These are causal questions. They reflect our desire to know whether our treatments, policies, and programs promote behavior change and make people healthier. I’ll introduce you to this topic of causal inference in more detail in Chapter 8, but let’s consider for a moment the nature of a causal question.

To answer a causal question, we must think “what if”. If you want to determine whether a new drug helps people recover faster from a disease, a fundamental problem you’ll face is that you can’t give this new drug and the old drug to the same person simultaneously. Once this person goes down the new drug path, they can’t go down the old drug path (at the same time). So we have to create a situation where we can ask, “What would have happened if this person had gone down the old drug path instead?” This alternate path is known as the counterfactual, and we’ll discuss it in much more depth as we go. For now, I’ll simply describe it as the path not taken.

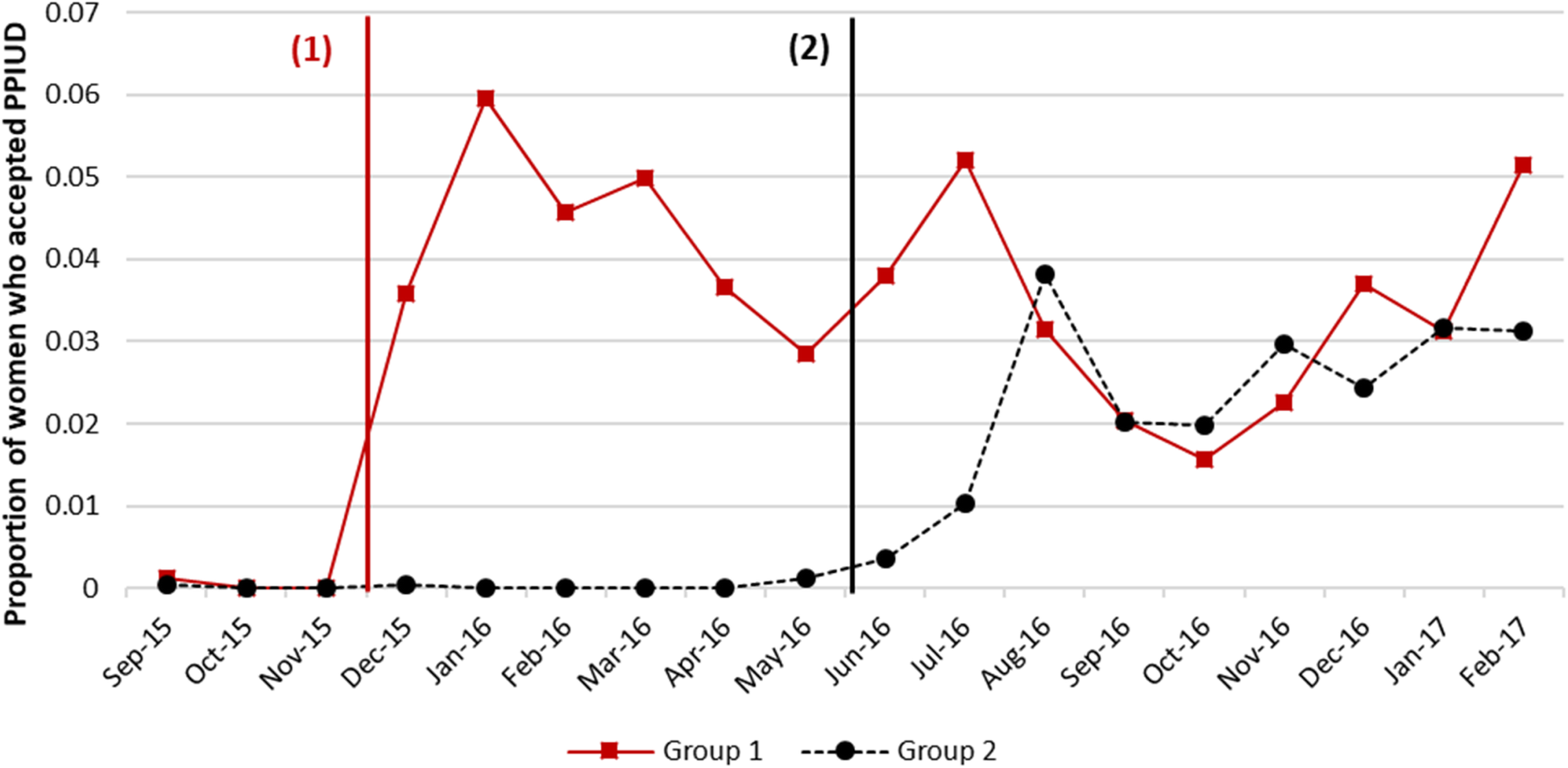

Research in low-income countries has estimated that nearly all women want to prevent or space their next pregnancy after they have just given birth, but 6 in 10 women do not start a method of family planning after delivering a baby. Precisely when women become fertile after childbirth varies and is influenced by factors like breastfeeding, but in most cases ovulation returns before family planning is started. This puts women at risk of getting pregnant again before they want to. One option is to have an IUD inserted immediately after delivery of the placenta or within the first month postpartum.

Consider another example from Nepal. Pradhan et al. (2019) looked at the path not taken for counseling women on the benefits of having an intra-uterine device (IUD) inserted following childbirth to prevent a new pregnancy. The authors used a stepped-wedge design that you’ll meet in Chapter 9 to estimate the causal effect of offering postpartum family planning counseling on the proportion of new moms who opt to have an IUD inserted. By this design, the counseling intervention was ‘turned on’ in six hospitals in a stepwise fashion. The three hospitals in Group 1 received the intervention first in a staggered start. Approximately six months later, the remaining hospitals began offering the counseling intervention, one after the other. This figure demonstrates that rates of uptake appeared to jump in each group after the intervention was introduced. Pradhan et al. (2019) estimated that the intervention increased IUD uptake by 4.4 percentage points [95%CI: 2.8–6.4 pp].

Figure 5.4: Trends in PPIUD uptake. Source: Pradhan et al. (2019).
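The stepwise rollout described above can be sketched as a simple schedule. This is only an illustration of the stepped-wedge idea: the hospital labels, the number of periods, and the start times are hypothetical and do not reproduce the actual timing in Pradhan et al. (2019).

```python
def stepped_wedge_schedule(start_periods, n_periods):
    """Build a rollout schedule: 0 = control period, 1 = intervention period.

    Every cluster begins in the control condition, crosses over at its own
    start period, and never switches back.
    """
    schedule = {}
    for cluster, start in start_periods.items():
        schedule[cluster] = [1 if period >= start else 0 for period in range(n_periods)]
    return schedule


# HYPOTHETICAL timing: three hospitals start in sequence, then three more later.
starts = {"H1": 1, "H2": 2, "H3": 3, "H4": 5, "H5": 6, "H6": 7}
schedule = stepped_wedge_schedule(starts, n_periods=9)

for hospital, row in schedule.items():
    print(hospital, "".join(str(x) for x in row))
```

Reading across a row shows one hospital over time, and reading down a column shows which hospitals are still serving as controls in a given period. That mix of before and after periods across hospitals is what the design uses to estimate the effect.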

On the surface, questions of causal inference (or counterfactual prediction if you prefer) resemble questions of prediction/relation. (See the discussion on Andrew Gelman’s blog where he frames causal models as a special case of predictive models.) For X to cause Y, it must be true that X is related to or associated with Y.

What distinguishes these questions is often the research design we use to find answers, so that is where we will spend our time. And it will be time well-spent. Causal inference is one of the most important topics for applied global health researchers. When it comes to making decisions about prevention and treatment and how to allocate resources, we want to know what works, why, and for whom.

5.2 Specifying your research question

Need to develop a good qualitative research question? See this short video by Yale University (2015). Fundamentals of Qualitative Research Methods: Developing a Qualitative Research Question (Module 2). Source: https://tinyurl.com/y5sexqwg.

A good research question is a specific research question. I’ll share two mnemonics to help you get specific: FINER and PICO.

Imagine that we wanted to study the uptake or use of bed nets. We could ask a descriptive research question like, “How many children sleep under bed nets?” It’s a good start, but this question is too general. Children of what age? Living where? We also need to define what we mean by sleeping under a bed net. In this line of research, it is common to ask about the previous night, as in the night before the survey.

A better way to phrase the question is, “What percentage of children under 5 years of age in Kenya slept under an insecticide-treated net the previous night?” An example of a predictive research question on the same topic is, “What are the predictors of the use of insecticide-treated nets among children under 5 years of age in Kenya?”

Both of these examples are FINER than the first one: Feasible, Interesting, Novel, Ethical, and Relevant (Hulley, Newman, and Cummings 2007).

| Criterion | What it means |
| --- | --- |
| Feasible | Some research questions will take a long time to answer, cost too much, require too many participants, require skills or equipment that you do not have, or will be too complex to implement. |
| Interesting | Research requires funding and effort. If you do not ask a sufficiently interesting question, you will not get funding. If you manage to get funding but lose interest in the question, you might not finish. Unlike other domains, global health research tends to have long timelines, and it’s important to work on things you will find interesting over the long term. |
| Novel | Replication is an important part of science, but the majority of funding goes to research that asks new and interesting questions. |
| Ethical | It would be very interesting to create a prison simulation to determine whether characteristics of the people or situation cause abusive behavior, but this would not be ethical because it could lead to the harmful treatment of research subjects. Right? |
| Relevant | In addition to being interesting, a research question should also be relevant. The answer should move the field forward in some way. Making this determination requires a thorough review of the literature and conversations with senior colleagues. |

The second mnemonic is PICO, which I introduced in Chapter 3. In clinical research, good clinical questions always include PICO: Patient/Population/Problem, Intervention/Prognostic factor/Exposure, Comparison, and Outcome.

| Letter | Element |
| --- | --- |
| P | Patient, Population, or Problem |
| I | Intervention, Prognostic Factor, or Exposure |
| C | Comparison |
| O | Outcome |

Let’s use PICO to develop a research question about the efficacy of mosquito bed nets in preventing malaria. The problem is malaria infections. The population is children under 5 years of age. Because intervention studies tend to be smaller in reach than nationally representative surveys, we might add “living around the Lake Victoria basin in Kenya”. The intervention is the application of an insecticide-treated net. The comparison group might be children living in families who are provided an untreated bed net. One outcome measure could be the rate of parasitaemia after the intervention. (In the PICO table, a prognostic factor refers to covariates that could influence the prognosis of the patient, and an exposure is something that we think might increase the risk of an outcome.)

We combine all of these elements into a single research question:

Among children under 5 years of age living around the Lake Victoria basin in Kenya, are insecticide-treated mosquito nets more effective than untreated nets at preventing parasitaemia?
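If it helps to see the assembly step laid out mechanically, here is a minimal sketch that stores the four PICO elements and stitches them into a question. The `PICO` class and its sentence template are hypothetical conveniences of mine, not a standard tool, and the template only fits efficacy-style questions like this one.

```python
from dataclasses import dataclass


@dataclass
class PICO:
    """Container for the four elements of a PICO question (illustrative only)."""
    population: str
    intervention: str
    comparison: str
    outcome: str

    def to_question(self) -> str:
        # One possible template; other question types need different wording.
        return (
            f"Among {self.population}, is {self.intervention} "
            f"more effective than {self.comparison} at {self.outcome}?"
        )


bed_nets = PICO(
    population="children under 5 years of age living around the Lake Victoria basin in Kenya",
    intervention="an insecticide-treated mosquito net",
    comparison="an untreated net",
    outcome="preventing parasitaemia",
)
question = bed_nets.to_question()
print(question)
```

If you cannot fill in one of the four fields, that is usually a sign the question is not yet specific enough.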

5.3 Outlining research aims

Your research question should drive your overall study objectives, known in some circles as specific aims. Aims are the work products you will complete within a given project period. “Prevent malaria” is not an aim. “Cure cancer” is not an aim. These might be the goals that motivate you to come to work every day, but they do not describe the work you propose to complete as part of a specific project.

For some formative work (especially studies that use qualitative methods), you might propose to describe some phenomenon. This will often be seen as a weak aim for larger grant proposals. Reviewers might expect you to have already completed this formative work and discuss it under preliminary evidence.

When formulating aims, the typical advice is to use strong verbs, such as identify, define, quantify, establish, estimate. Write your aims as headlines and follow up with a description of how you will achieve the aim. Using the example research question above, you might develop the following aim:

Aim 1: Estimate the effect of insecticide-treated mosquito nets for preventing parasitaemia.

You would then go on to describe the experiment you plan to conduct as part of this aim, as well as specify a hypothesis.

It’s common to propose multiple aims in a project proposal. Small grant proposals could reasonably have 1 or 2 aims, which might correspond to 1 to 2 studies. Larger proposals might outline 2 to 4 aims. More than this and your project probably is not feasible (or at least will be perceived by reviewers as not feasible). If you do propose multiple aims, make sure that failure in one aim does not prevent you from achieving other aims. For instance, if your first aim is to develop a new process for analyzing biological samples and you fail to achieve this aim, any other aims that depend on this new process are impossible to complete. This makes your entire proposal ride on a reviewer’s assessment of your chances of succeeding on the first aim.

5.3.1 WRITING A CONCEPT NOTE OR SPECIFIC AIMS PAGE

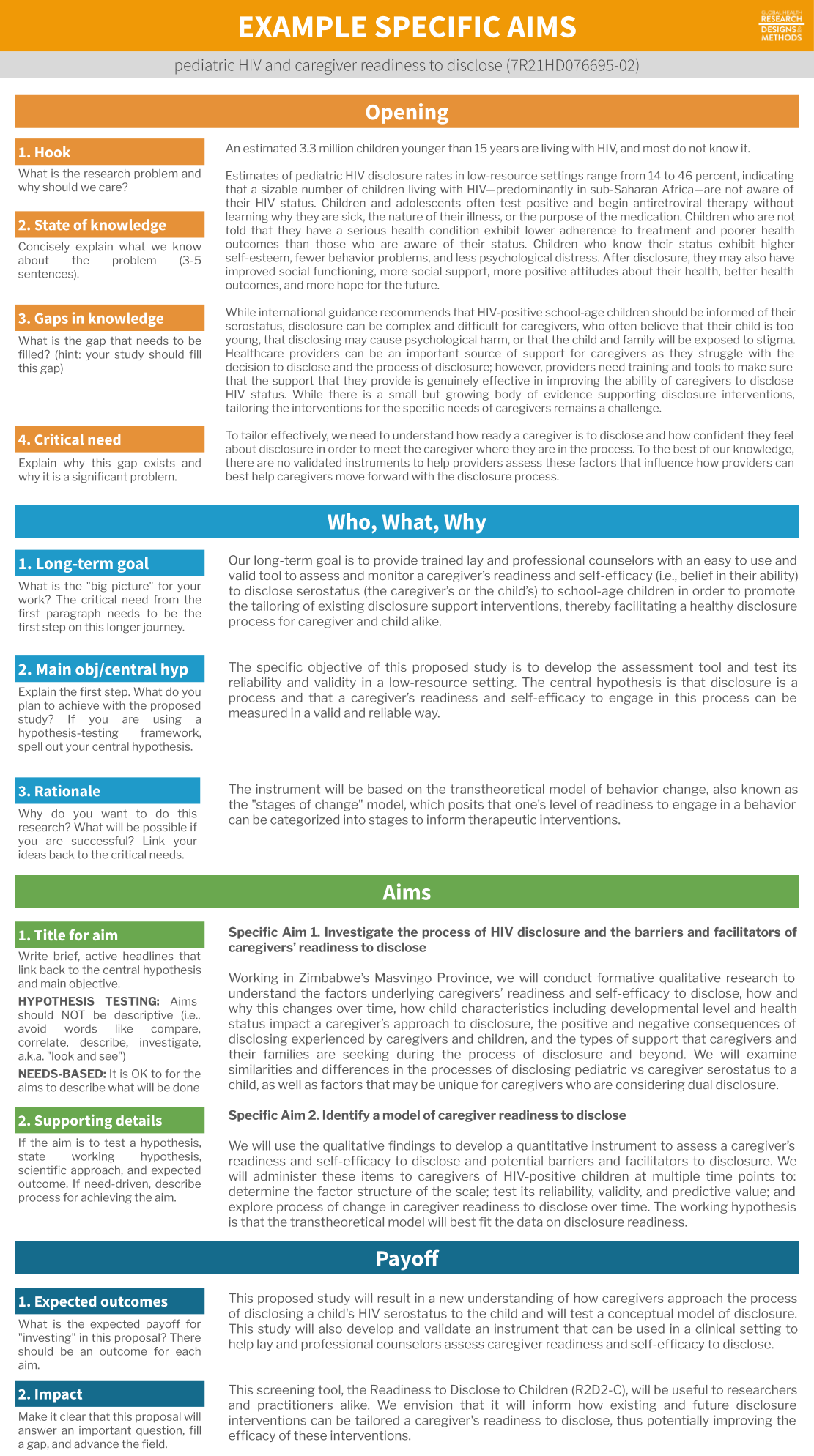

Ideas don’t sell themselves. Before you can change the world, you have to convince collaborators to join your team and funders to believe in the work. One of the best ways to strengthen your pitch is to develop a short concept note. If you are applying for NIH funds, concept notes are known as Specific Aims documents and are always limited to 1 page.


Figure 5.5: Anatomy of a specific aims page. Source: Inspired by Sneck (2015). To view a full resolution version of this figure, visit https://tinyurl.com/y5s35jo5.

Most people will tell you that it pays to follow a known format when writing your Specific Aims page. Grant writing is not creative writing. Your 1-page Specific Aims page is all that some reviewers will read, often while flying at 30,000 feet, cramped in economy with no space for their laptop. Or after a full day’s work and kid bedtimes. You get the picture. Make your reviewer’s job easy by drafting a tight 1-pager following some conventions.

Example specific aims page

Here is an example specific aims page from my work, broken down in a common 4-paragraph format. It’s not a perfect example, but along with others (see here) it might help to guide your work.

In this example, my opening paragraph tries to hook you as the reader, tells you what we know, the gaps in our knowledge, and why filling this gap is a critical need. The next paragraph gives an overview of what I propose to do in the context of my long-term goals. Then come two specific aims with supporting details, followed by a short payoff paragraph that tells people why it makes sense to fund this proposal.

Figure 5.6: Specific aims example from a study of caregiver readiness to disclose a child’s HIV status to the child (7R21HD076695-020).

5.4 Developing hypotheses

Figure 5.7: Can you see yourself in little Jimmy?


Chances are you’ve presented a poster like this at a science fair at some point in your life. It depicts the scientific method in action:

- Define a question

- Develop a hypothesis

- Collect data related to this hypothesis

- Analyze the data

- Make conclusions based on the data

- Replicate the study

We call this the hypothetico-deductive model because we develop a hypothesis (a conjecture) and deduce the consequences that should follow from this hypothesis. Stating what we expect based on a hypothesis makes it falsifiable. We can collect data and determine whether the data are consistent with or opposed to this prediction. If the data conflict with the hypothesis, we reject the hypothesis. If the data are consistent with the hypothesis, we say that we have ‘corroborated’ our hypothesis. In this model of hypothesis testing, data never ‘prove’ a hypothesis, and we never ‘accept’ our hypothesis because it’s possible that new data could falsify it.

Daniël Lakens, the creator of the excellent Coursera course, “Improving your statistical inferences”, turned me on to a great book called “Understanding Psychology as a Science” by Dienes (2008). I highly recommend this book (and Lakens’ course) to build a strong foundation in your own philosophy of science.

This characteristic of falsifiability is what the famous philosopher of science Karl Popper said distinguishes science from non-science. Science advances as theories are subjected to tests in which we make specific, falsifiable predictions based on these theories. As we will see, not all tests are ‘severe’ and it’s possible to proceed with a falsification-lite approach that has the veneer of science, but is empty at its core.

5.4.1 WHERE DO HYPOTHESES COME FROM?

In the classical view of science, we develop hypotheses out of existing theory, an explanation of some aspect of our world that has been confirmed through repeated, falsifiable tests. We’ll discuss the role of theory in global health in the following chapter, so for now let it suffice to say that theories can be a good source of hypotheses.

Pro tip: If you’re looking to explore or describe some phenomenon and are struggling to identify a testable hypothesis, you can probably stop trying. You might be operating in a hypothesis-generating framework, rather than a hypothesis-testing framework.

It’s also worth noting that a study can include elements of both qualitative and quantitative approaches. We call this mixed methods research, and it’s a topic we’ll cover in Chapter 15.

We can also begin with our observations of the world and generate hypotheses to test. This can be an informal process, or it can take the form of formal qualitative research. Whereas quantitative studies are often deductive—state a hypothesis, deduce the consequences that would be consistent with the hypothesis, and collect data to test this hypothesis—qualitative studies are more often inductive or exploratory. In qualitative research, we make observations about the world and use these observations to describe why or how the world appears to operate the way it does. From these observations we can create testable hypotheses for future studies that, if not rejected, can generate theory.

5.4.2 WHEN ARE HYPOTHESES DEVELOPED?


Figure 5.8: The hypothetico-deductive model of the scientific method is short-circuited by a range of questionable research practices (red). HARKing, or hypothesizing after results are known, involves generating a hypothesis from the data and then presenting it as a priori. Source: https://cos.io/rr/

This probably seems like a redundant question because I already listed the order of the scientific method, and I acknowledged that you probably learned this order firsthand in primary school. But here’s the thing: scientists sometimes Hypothesize After the Results are Known. This is known as HARKing, and it’s a no-no (Kerr 1998).

To be clear: it’s 100% OK to collect data, analyze the results, and generate new ideas (hypotheses) to test in future studies. That’s called exploratory analysis, and if you label it as such everyone is happy.

But it’s not OK—in fact it’s dishonest and a form of fraud—to look at your data, develop hypotheses that conform to the data, and pass your new ideas off as a priori hypotheses that you tested. That’s lying. And it’s not science. You can’t falsify a hypothesis that you create after looking at the data.

I’m going to refrain from hitting you with some depressing statistics about the state of science today. It’s only Chapter 5. I’m still working on building your enthusiasm for research. We’ll revisit research sins like HARKing in Chapter 18, along with strategies for preventing such bad behavior.

5.5 The lifecycle of a research question

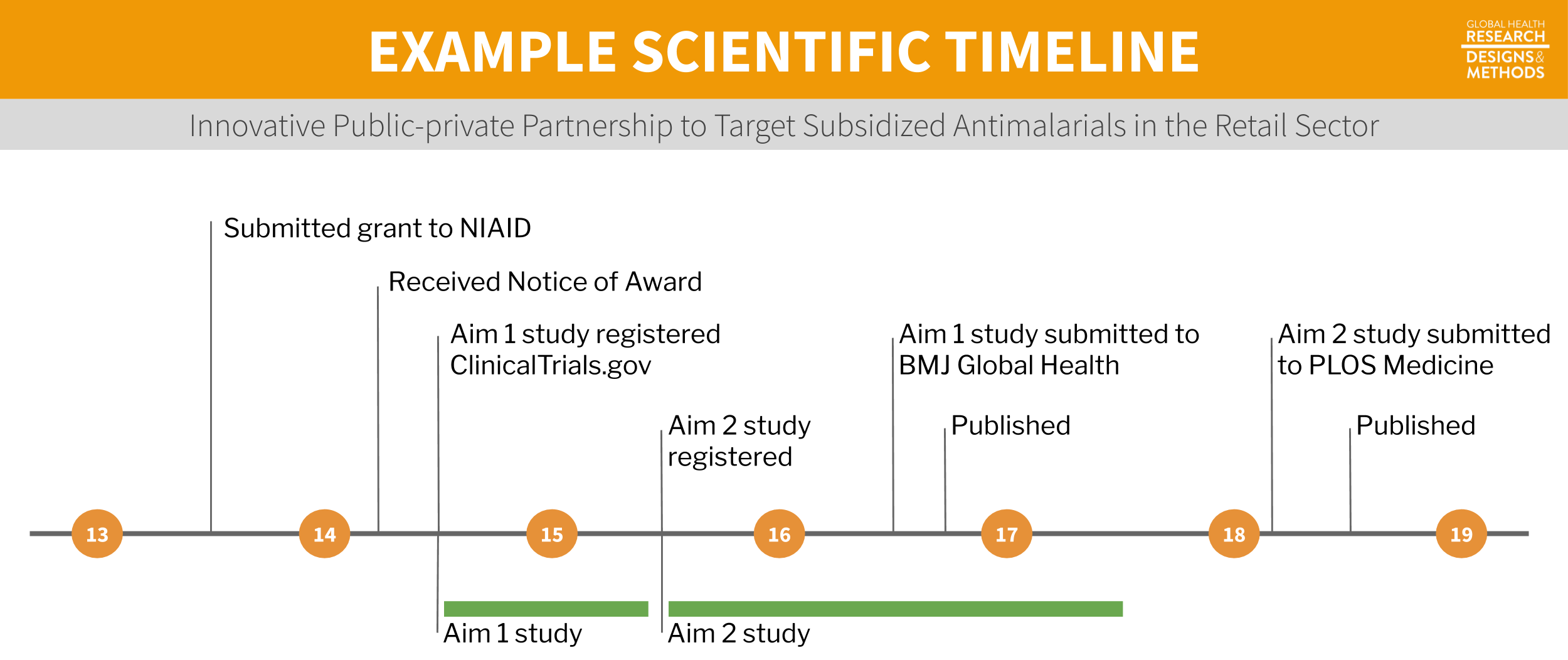

Research questions have a way of sticking with us. When you read an article published in your favorite journal, chances are that the authors have been living and breathing the motivating research question for years. To make this point and demonstrate the lifecycle of a research question, I will walk you through an example from Dr. Wendy O’Meara’s research group on subsidies for malaria testing and treatment.

Figure 5.9: Example timeline.

5.5.1 GRANT PROPOSAL


Figure 5.10: Specific aims page from the O’Meara lab. For a full resolution version, visit https://tinyurl.com/y2k6jsa4.

Once you have your core team in place, it’s time to get funding to make your idea a reality. In the O’Meara lab’s example, they developed a proposal in response to this Funding Opportunity Announcement (FOA) from the NIH:

The purpose of this dissemination and implementation research funding opportunity announcement (FOA) is to support innovative approaches to identifying, understanding, and overcoming barriers to the adoption, adaptation, integration, scale-up and sustainability of evidence-based interventions, tools, policies, and guidelines. Conversely, there may be a benefit in understanding circumstances that create a need to “de-implement” or reduce the use of strategies and procedures that are not evidence-based, have been prematurely widely adopted, or are harmful or wasteful.

The NIH offers different-sized research grants. The R03 is the smallest grant, with up to $100,000 in direct costs over 2 years. This mechanism is good for pilot or feasibility studies. The R21 comes with up to $275,000 in direct costs over 2 years. Researchers apply for an R21 when they want to test a new idea with some preliminary evidence. The R01 is the largest grant, with no fixed budget cap, for work to be carried out over up to 5 years.

They directed their proposal to the National Institute of Allergy and Infectious Diseases (NIAID), one of the many NIH institutes participating in this funding opportunity. The team proposed two aims as part of an R01 grant:

Aim 1: Estimate the effect of antimalarial subsidy level on ACT purchase and adherence to RDT results. In the first phase of the project, we will test the effect of a range of subsidy levels on the demand for ACTs, and also test how the response to subsidy varies with or without information from diagnostic testing.

Aim 2: Evaluate the public health impact of targeted antimalarials subsidies through scale-up. In the second phase, we will determine the community-wide effects of targeting the antimalarial subsidy in both a high and a low malaria transmission region of Kenya through a partnership between CHWs and the private retail sector.

In 2014 when this proposal was funded, the payline for new R01s at NIAID was the 13th percentile. That means the O’Meara lab’s proposal scored within the top 13% of all proposals submitted. Think of how crushed you would be to have a proposal sitting at the 14th percentile!

5.5.2 FORMATIVE WORK, AIM 1

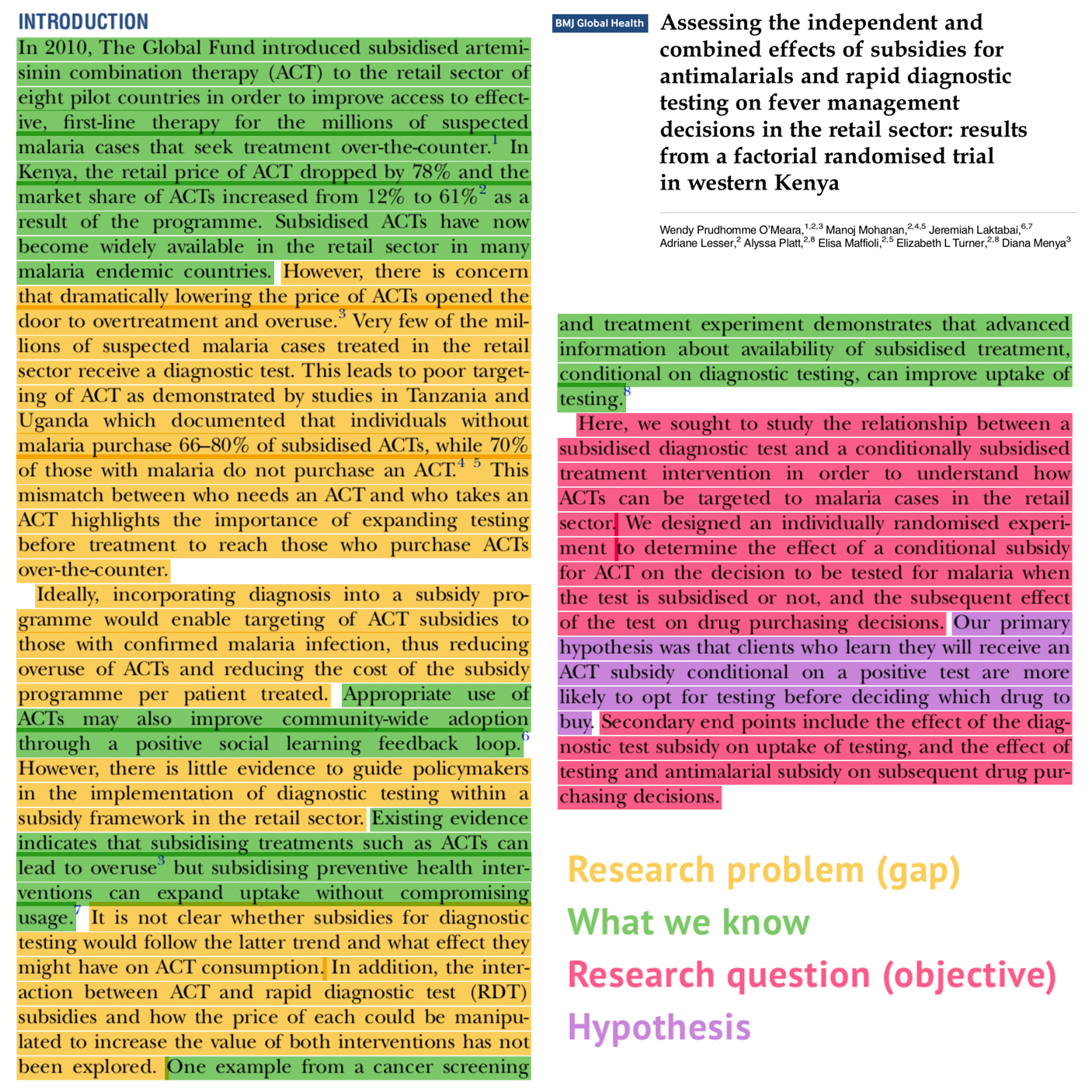

The O’Meara lab conducted two studies as part of this project. The first was a formative study to determine the optimal subsidy level for malaria treatment. Let’s use the paper they published on this Aim 1 work to examine how research problems, questions, and hypotheses come together in the Introduction of a scientific manuscript. Take a moment and download this article in BMJ Global Health by O’Meara et al. (2016). I’ve highlighted the authors’ descriptions of the research problem, what is known about the problem, the research question, and the hypothesis.

Figure 5.11: Example Introduction section published in BMJ Global Health. Source: O’Meara et al. (2016)

The research problem is that we don’t know how to combine diagnostic testing for malaria with drug subsidy programs in order to expand access to first-line treatments while ensuring that the medication is not overused by individuals without malaria. As noted in the Abstract:

There is an urgent need to understand how to improve targeting of artemisinin combination therapy (ACT) to patients with confirmed malaria infection, including subsidised ACTs sold over-the-counter.

The authors framed the objective of the study as follows:

We sought to study the relationship between a subsidised diagnostic test and a conditionally subsidised treatment intervention in order to understand how ACTs can be targeted to malaria cases in the retail sector. We designed an individually randomised experiment to determine the effect of a conditional subsidy for ACT on the decision to be tested for malaria when the test is subsidised or not, and the subsequent effect of the test on drug purchasing decisions.

While most scientific papers follow the pattern of describing the research problem and outlining the objectives of the paper, you will find that it’s relatively uncommon, at least in the health sciences, to explicitly state the research question as a question. If you look at recent issues of a journal like The Lancet Global Health, you’ll also find that many articles do not even state explicit hypotheses.

We can use PICO to construct the implied research question:

| PICO element | Description |
| --- | --- |
| Population | individuals (>1 year) in Bungoma County, Kenya with untreated symptoms of malaria |
| Intervention | conditional subsidy for ACT |
| Comparison | no subsidy |
| Outcome | uptake of malaria testing and rational use of ACTs |

Among individuals (>1 year) in Bungoma County, Kenya, does offering a subsidy for ACT conditional on testing positive for malaria increase the uptake of malaria testing and the rational use of ACTs compared to no subsidy?

If you’re coming from a discipline like economics, you might wonder if I forgot to paste the full Introduction of O’Meara et al. (2016). I did not. This is it. It’s common in the health sciences to publish very brief Introduction sections.

For comparison, here’s an example Introduction from Blattman, Jamison, and Sheridan (2017) published in American Economic Review. The first thing you notice is the difference in length. The complete typeset paper is 42 pages, compared to only 10 pages for the above example published in BMJ Global Health. The AER article is gated, but if you download the pre-print and read the Introduction, you’ll also get a sense for how economists structure scientific papers differently.

Figure 5.12: Example Introduction section published in American Economic Review. Source: Blattman, Jamison, and Sheridan (2017)

5.5.3 PUBLIC HEALTH IMPACT, AIM 2

With the formative work completed, the O’Meara lab moved on to the second aim to evaluate the public health impact of targeted antimalarials subsidies through scale-up. They designed and conducted a stratified cluster-randomised controlled trial in Western Kenya with 32 communities assigned to get usual care or free malaria testing and a partially subsidized voucher to purchase ACTs if testing positive.
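To give you a feel for what “stratified cluster-randomised” means in practice, here is a minimal sketch that assigns whole communities to study arms, balancing the arms within each stratum. The stratum names, community labels, and seed are hypothetical. The number 32 comes from the text, but this is not the authors’ actual randomisation procedure.

```python
import random


def stratified_cluster_assignment(strata, seed=None):
    """Randomly assign clusters to two arms, half and half within each stratum."""
    rng = random.Random(seed)  # a seed makes the allocation reproducible
    assignment = {}
    for stratum, clusters in strata.items():
        shuffled = list(clusters)
        rng.shuffle(shuffled)
        half = len(shuffled) // 2
        for cluster in shuffled[:half]:
            assignment[cluster] = "intervention"
        for cluster in shuffled[half:]:
            assignment[cluster] = "control"
    return assignment


# HYPOTHETICAL strata: 2 strata x 16 communities = 32 communities in total.
strata = {
    "stratum_A": [f"A{i:02d}" for i in range(1, 17)],
    "stratum_B": [f"B{i:02d}" for i in range(1, 17)],
}
assignment = stratified_cluster_assignment(strata, seed=2015)

n_intervention = sum(arm == "intervention" for arm in assignment.values())
print(n_intervention, "intervention communities,", len(assignment) - n_intervention, "control communities")
```

The unit of randomisation here is the community, not the individual. Everyone living in a community gets whatever that community was assigned, which is why the analysis later has to account for clustering.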

Study protocol

As we’ll discuss in Chapter 18, it is becoming more common to publish a study protocol prior to starting data collection (or at least prior to viewing the data) that outlines your research question, hypothesis, study design, data collection methods, and analysis plan. Download the O’Meara lab’s protocol (Laktabai et al. 2017) from BMJ Open and notice how a study protocol, like a grant, uses future tense to describe what will be done.

Figure 5.13: Aim 2 study protocol. Source: Laktabai et al. (2017)

Trial registration

Regulatory bodies require that drug trials be prospectively registered with a database like ClinicalTrials.gov. Registration of non-drug trials is not mandatory in the same way, but most journals require it as a condition of publication of clinical trials. (According to the NIH, a clinical trial is defined as, “A research study in which one or more human subjects are prospectively assigned to one or more interventions (which may include placebo or other control) to evaluate the effects of those interventions on health-related biomedical or behavioral outcomes.” The NIH offers guidance for checking whether your study is a clinical trial.) There are many registries to choose from, but ClinicalTrials.gov is the oldest and most widely used. This is where the O’Meara lab registered their study for Aim 2 (NCT02461628).

Figure 5.14: Aim 2 trial registration. Source: ClinicalTrials.gov, https://tinyurl.com/yxundeds.


Published paper

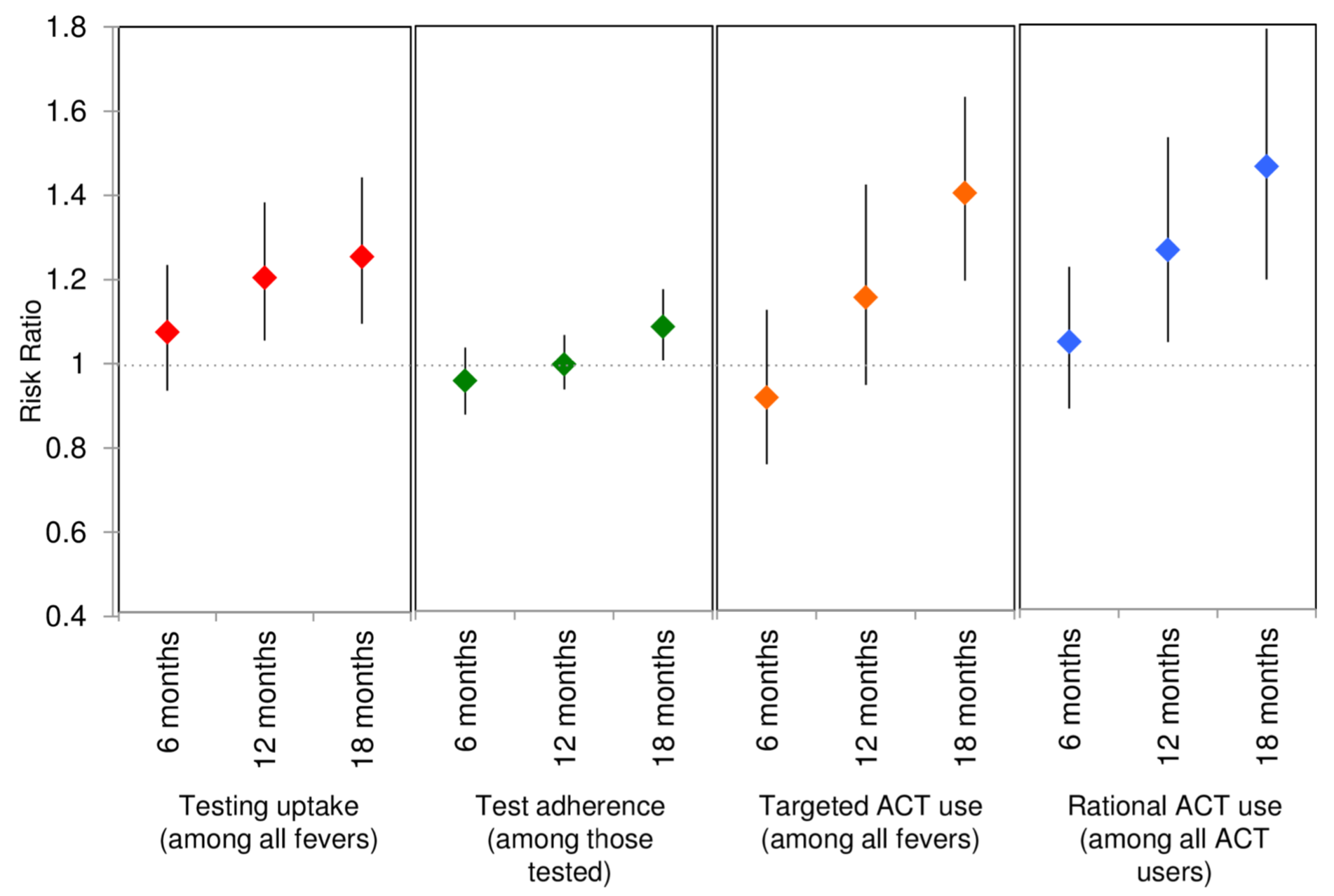

Figure 5.15: Adjusted modeled RRs and 95% CIs for the primary outcome of uptake of testing and 3 composite outcomes. Source: O’Meara et al. (2018).


Approximately 5 years after seeking funding to estimate the impact of targeted subsidies for antimalarials in the retail sector, O’Meara et al. (2018) published the results of their Aim 2 study. They reported that 18 months after introducing the program to the intervention communities, the proportion of people opting for testing prior to treatment increased by 25%, and the proportion of antimalarials dispensed to true cases of malaria improved by 40% relative to the control communities. The authors conclude:

In summary, we demonstrate that it is possible to target ACT subsidies to diagnostically confirmed malaria cases. Allocation of subsidy dollars between testing and treatment for test-positive individuals may present a better use of programmatic resources than unconditional private sector subsidies.
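Relative results like the ones reported above are often expressed as risk ratios (RRs). Here is a minimal sketch of an unadjusted risk ratio with an approximate 95% interval. The counts are hypothetical numbers I picked so that the ratio works out to 1.25. The authors reported adjusted, modeled RRs, and a real analysis of a cluster-randomised trial must account for clustering, which this sketch ignores.

```python
import math


def risk_ratio(events_tx, n_tx, events_ctrl, n_ctrl, z=1.96):
    """Unadjusted risk ratio with a log-scale normal-approximation interval."""
    risk_tx = events_tx / n_tx
    risk_ctrl = events_ctrl / n_ctrl
    rr = risk_tx / risk_ctrl
    # Standard error of log(RR) for two independent proportions.
    se_log_rr = math.sqrt(1 / events_tx - 1 / n_tx + 1 / events_ctrl - 1 / n_ctrl)
    lower = math.exp(math.log(rr) - z * se_log_rr)
    upper = math.exp(math.log(rr) + z * se_log_rr)
    return rr, lower, upper


# HYPOTHETICAL counts: 500 of 1000 tested in one arm vs 400 of 1000 in the other.
rr, lower, upper = risk_ratio(500, 1000, 400, 1000)
print(f"RR = {rr:.2f} (95% CI: {lower:.2f} to {upper:.2f})")
```

An RR of 1.25 corresponds to a 25% relative increase. Note that a relative increase says nothing by itself about the absolute size of the change.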

5.6 The Takeaway

The first step in asking a good research question is knowing what type of question you want to ask. Some questions are descriptive (e.g., What is the prevalence of condom use among adolescent males in Nigeria?). Other questions are predictive or relational (e.g., To what degree are wealth and smartphone ownership correlated?). But a lot of questions that drive policy are causal in nature (e.g., What is the impact of text message reminders on medication adherence?). Once you know what type of question you want to ask, a mnemonic like PICO or FINER can guide how you construct the question. The next step is to turn your research question into a study proposal anchored in a few specific aims that represent the work products you will complete within a given project period. Crafting a tight concept note or Specific Aims page early on can help clarify your thinking, recruit colleagues, and secure funding. Depending on your aims, you might outline specific hypotheses to test. By registering these hypotheses along with your aims and procedures, you will establish a public record and demonstrate your commitment to practicing good science.

Page built: 2020-08-09